Cyber AI Suite (CAIS)

AI-driven code generation is rapidly spreading across development teams, but governance and visibility often lag behind. Many organizations still lack a clear view of which AI models developers are using, where MCP servers operate, or which portions of their codebase are AI-generated or externally sourced.

AI coding agents also introduce new attack surfaces, including prompt injection, data poisoning, transformer poisoning, and other forms of external manipulation. Meanwhile, legacy AppSec tools often struggle to analyze AI-generated code, leaving security teams with limited insight into how “black box” models influence production software.

What is Cyber AI Suite (CAIS)?

Followed with the following content: “As AI security concerns shift from theoretical to tangible, the threat landscape evolves rapidly. Corporate data is increasingly at risk of being ingested by third-party models unnoticed. AI-powered applications with internal access introduce new attack vectors, creating a blind spot where innovation outpaces governance.

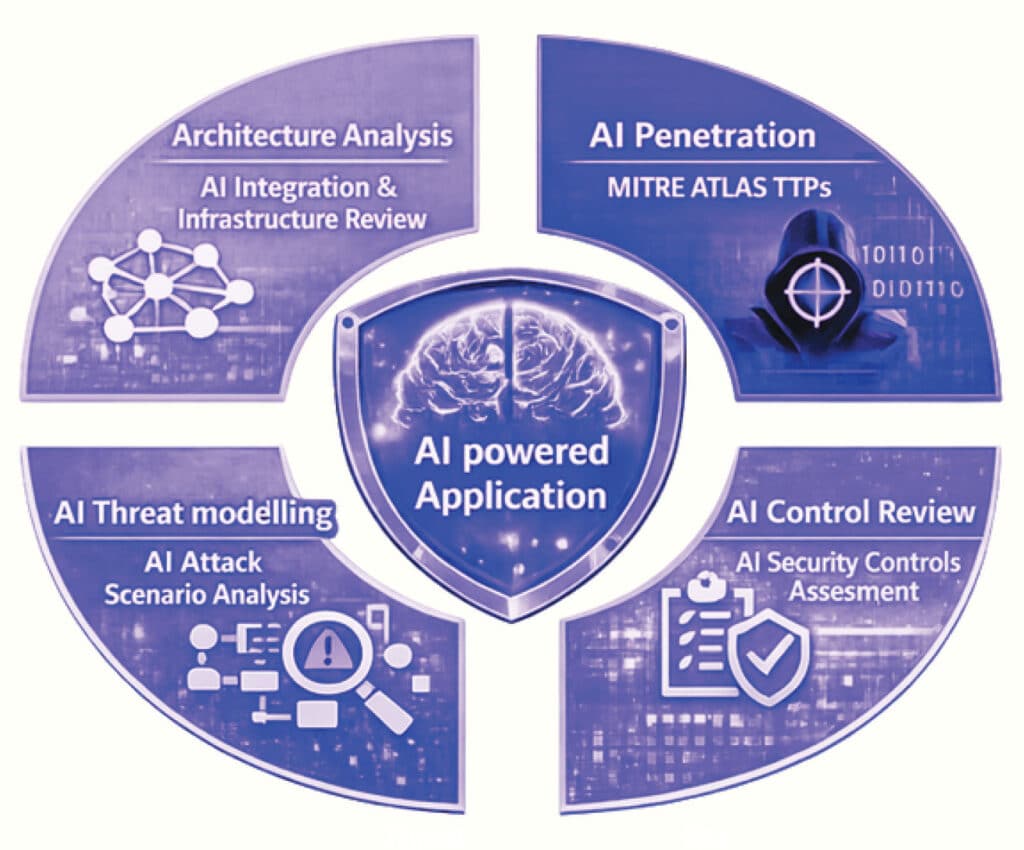

HolistiCyber’s Cyber AI Suite (CAIS) ensures the security of your AI innovations from the ground up. The CAIS service begins with a deep Architecture Review of your RAG pipelines and Vector Databases to identify structural risks. We then apply rigorous Threat Modelling to map potential logic flaws before our specialized AI Red Team actively stress-tests your defenses against adversarial attacks like prompt injection and jailbreaking based on MITRE ATLAS™ and OWASP Top 10 for LLM. Finally, we deliver a robust Governance framework, ensuring your AI systems remain compliant with global standards such as NIST AI RMF and ISO 42001”

Architecture & RAG Assessment

Focuses on the technical architecture and technology stack of the solution, including RAG pipelines and vector databases.

AI Penetration Test

Simulates AI-oriented attacks using tactics, techniques, and procedures (TTPs) from MITRE ATLAS™ and OWASP Top 10 for LLM.

Security Controls Assessment (Agentic/Embedded AI)

Uses a proprietary AI Security Framework that incorporates MITRE ATLAS™, NIST AI RMF, and ISO 42001. This provides you with a quantifiable, board-ready metric that demonstrates your compliance posture and prioritizes remediation where it matters most.

Threat Modeling

Focuses on the attack vectors unique to AI. We identify new threat actors and attack vectors, along with the inherent changes to solutions that result from the adoption of AI technologies.